Greenpeace UK is testing dozens of form workflows, emails, monthly donor asks, Facebook lead generation and more. Here’s a look at the program, what’s being tested, and how it is spreading across the organisation.

Greenpeace UK, one of the largest Greenpeace offices, has for years used direct dialogue/street canvasses and telephone marketing to bring in (and renew) donors. Renewing these supporters online is a challenge. So is turning online audiences into action takers and donors. It was clear that, over the long haul, the group needed new ways of engaging and converting people to activists and donors.

Facing similar pressures, many organisations might bring together a cross-department team and outside strategists to map out a new long-term approach. This may work wonders. Or it may not. And finding out could mean months (or years) of meetings, planning and development.

Don’t Build It. Test It.

Greenpeace UK chose to create a rapid optimisation and testing program. The need to get more of the online community to act, boost new donor numbers and find stable giving sources drove a willingness to invest in testing.

A small team from different departments is leading the process. Forward Action, a consulting and technical partner with optimisation/testing experience, was brought on board. In addition, an internal testing group from every department is sharing learning and helping build a sustainable experimentation and optimisation culture.

First year results are encouraging and touching more parts of the organisation than expected.

Facebook has become a leading source of new email subscribers and fundraising leads with up to 5,000 leads per week. Optimising a post-share donation page has resulted in a 500% donation rate increase. Testing is now happening in nearly all phases of online advocacy, mobilisation and fundraising. A small change to the 2016 year-end online member survey, for instance, increased survey responses 86% over 2015.

Not Easy. But Not that Hard.

A/B testing is not new (and there’s no shortage of articles about it in the marketing and nonprofit worlds). But ongoing testing efforts often fail for lack of experience and resources.

Alex Lloyd of Forward Action notes that Greenpeace UK faced typical challenges as they got going. “The tools they were using [Blue State Digital] were hard to develop with so testing was simply too hard for internal teams to pull off. They needed to be able to write code.” This is a common problem, and a big obstacle to testing in many smaller organisations.

Lloyd also points to culture. There was no ongoing testing program. The organisation had fallen out of the habit of testing, and nobody was there to take the lead.

Mal Chadwick of Greenpeace UK helps lead the testing program. Chadwick notes that testing wasn’t an added burden because he was given time to help run the effort. Time is not optional: “Good testing is 95% process,” Chadwick says. He added that testing “breaks down when you don’t have good process and someone to drive it forward.” Distributing responsibility for testing around the organisation doesn’t work as well. Chadwick and Lloyd both point to this as one reason past testing efforts didn’t last.

What’s Been Tested (and Learned)

Greenpeace UK has an engaged social media audience, including over 600,000 Facebook followers. “We wanted to use our brand as a hook to bring people in,” said Jen Douglas, a Greenpeace UK marketing executive.

“Bearing Witness is a core value of Greenpeace,” Douglas told us. “We wanted to engage people around the idea of bearing witness,” she said, to help Greenpeace UK better, and continually, connect people to actions, opportunities to share actions with others and (of course) fundraising.

Facebook now accounts for up to 5,000 fundraising leads per week. Some of these people have taken action in the past and are on the email list. Many are new. Ongoing testing is improving fundraising results from the Facebook audience, including rapid growth of direct debit (monthly giving) supporters.

A campaign focused on orangutan protection is an example of the “bearing witness” approach. The audience supports protection and Greenpeace UK has a long history of protecting orangutans and their habitat, including fighting rainforest destruction.

Using Optimizely (the primary testing platform used by Greenpeace UK), the team created an orangutan protection action and tested several elements to optimise the approach. An example of the action page is below:

This optimised petition is an example of a “Bearing Witness” campaign created by Greenpeace UK to engage Facebook fans and other supporters in their database.

The petition is simpler and more focused than past petitions but the greatest improvements come after signing. Testing focused on presenting action options as choices to prevent people from simply closing the window. (After all, how many times have you seen a pop-up window after clicking submit and just closed it?)

Here is an example of the page people see after signing the petition. It includes an option to share or skip. This is presented in two screenshots of the same page:

The top portion of an optimised share ask after signing a petition.

Optimised petition action gives people a chance to say “Skip” when asked to share the petition. Testing showed increased engagement with the page when people were given a chance to say “Skip.”

This boosted Facebook sharing by up to 60% but the bigger impact was on fundraising. Clicking Skip loads a monthly giving donation form:

Petition signers had the option to skip sharing and were presented with a donation form. This increased donations from Facebook supporters (largely new donors).

Greenpeace UK’s Douglas notes that this simpler three-step approach improves the user journey. The process optimises for user choice instead of giving people a clear option to quit. Overall, online signups are up 25% and actions are seeing as much as a 60% share rate.

Another tested change, placement of a donation button, helped the page raise four times what it cost to advertise the campaign on Facebook. Lloyd’s team made this possible by providing the technical ability needed to recode Blue State Digital forms across several tests. Staff entered the process doubting the impact of their previous post-action landing page but didn’t have the ability to code and test changes. Here’s what a similar post-action page looked like before:

This is an example of a thank you page presented to petition signers before optimisation and testing. The new format breaks up the options so that they load one by one based on user interaction.

Hmm. Which Action Should I Take?

One important takeaway: Break user options up into steps. Don’t present everything at once, as the original landing page above did.

A corollary: Empower people by giving them clear actions to choose from rather than just closing the page. The difference here is important. Instead of the user asking themselves “should I act or not?” they are asking “which action should I take?”

Forward Action’s Lloyd observes that more people now take the first action than took any action in prior versions of the post-petition page. Many people are taking a second action as well, something not even possible in the past.
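The stepped flow described above can be pictured as a small state machine in which every screen offers a short list of explicit choices, and each choice leads to another step. The Python sketch below is only an illustration of that idea, not Greenpeace UK's or Forward Action's code. The step and choice names are hypothetical, and the assumption that people who share also go on to the donation ask is ours (the article only confirms that Skip loads the donation form).

```python
from enum import Enum


class Step(Enum):
    PETITION = "petition"
    SHARE_ASK = "share_ask"
    DONATE_ASK = "donate_ask"
    DONE = "done"


# Every (step, choice) pair leads to a next step, so no screen is a dead end.
# Step and choice names are hypothetical, chosen for illustration only.
TRANSITIONS = {
    (Step.PETITION, "sign"): Step.SHARE_ASK,
    # Assumption: people who share are also shown the donation ask.
    (Step.SHARE_ASK, "share"): Step.DONATE_ASK,
    # From the article: clicking Skip loads the monthly giving form.
    (Step.SHARE_ASK, "skip"): Step.DONATE_ASK,
    (Step.DONATE_ASK, "donate"): Step.DONE,
}


def choices_at(step: Step) -> list[str]:
    """Return the choices offered on a given screen, in alphabetical order."""
    return sorted(choice for (s, choice) in TRANSITIONS if s is step)


def next_step(current: Step, choice: str) -> Step:
    """Return the screen that follows `choice`, or fail if it is not offered."""
    try:
        return TRANSITIONS[(current, choice)]
    except KeyError:
        raise ValueError(
            f"{choice!r} is not offered at step {current.value!r}"
        ) from None


if __name__ == "__main__":
    step = Step.PETITION
    for choice in ("sign", "skip", "donate"):
        print(f"{step.value}: options {choices_at(step)}, user picks {choice!r}")
        step = next_step(step, choice)
    print(f"finished at {step.value}")
```

Keeping the transitions in one table makes it easy to test a variant, for example swapping the order of the share and donation asks, without touching the rest of the page logic.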

This led the team to test a simple “Yes” or “No” choice on the post-petition share screen. Post-action shares rose 30% and donations were up 50%. Here’s an example of the Yes/No screen in a rainforest campaign action (clicking No leads to a donation form):

Greenpeace UK tested a simple Yes / No ask to share after taking action. Providing a clear choice (and removing other options) increased actions in general, raised shares and led to more donations.
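For readers who want to check results like these on their own pages, one common approach is to compare the conversion rates of two variants with a two-proportion z-test. The sketch below is a generic illustration in Python, not the method Greenpeace UK or Optimizely used. The function name and the visitor and conversion counts are hypothetical and are not taken from the campaign.

```python
import math


def compare_variants(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare variant B against control A.

    conv_* are conversions (e.g. shares or donations), n_* are visitors.
    Returns (relative lift of B over A, z statistic, two-sided p-value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    lift = (rate_b - rate_a) / rate_a

    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err

    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return lift, z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 1,000 visitors per variant.
    lift, z, p = compare_variants(conv_a=100, n_a=1000, conv_b=130, n_b=1000)
    print(f"lift: {lift:.0%}, z: {z:.2f}, p-value: {p:.3f}")
```

A large relative lift on a small sample can still be noise, which is one reason a steady process and a clear owner matter as much as the tooling.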

What’s Next

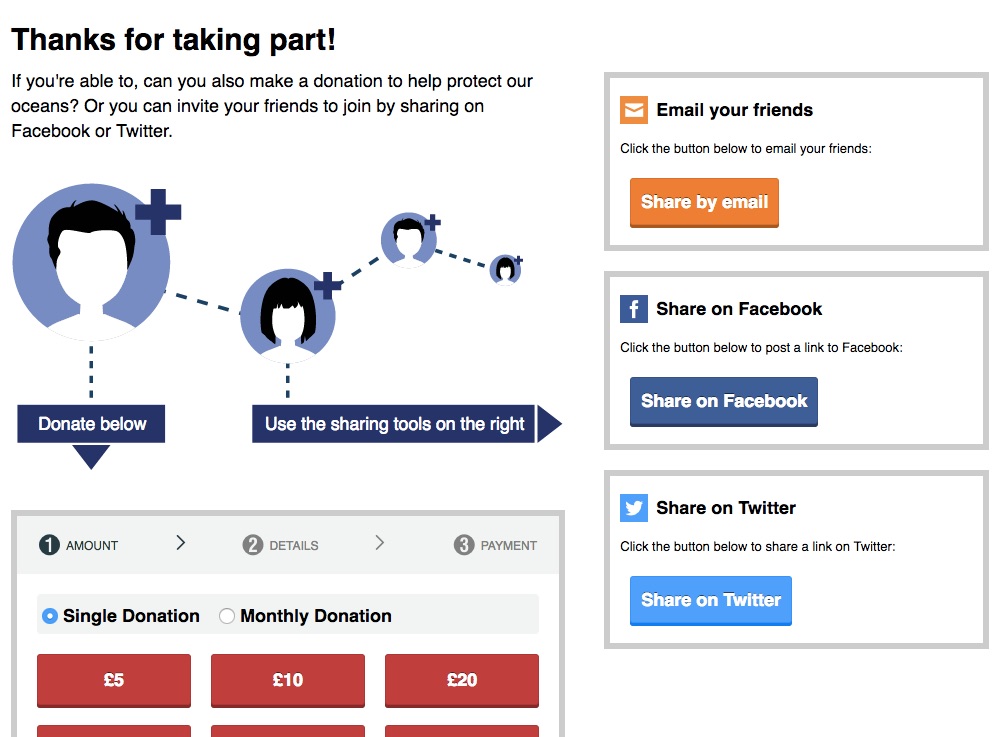

Chadwick and Douglas are continuing Greenpeace UK’s partnership with Forward Action in an effort to introduce changes to forms and more testing around the organisation. Douglas focuses on testing to increase sustainer giving. Tests are asking for smaller monthly gifts instead of a single donation. They’re also testing “interruptions” to the standard share or donate flow to ask for monthly donations. Here’s a recent monthly giving screen which has no zero-gift, close or one-time gift option:

An example of a monthly giving donation test that “interrupts” the standard online action process.

Results are encouraging and the fundraising team has begun testing direct debit/sustainer asks in place of usual fundraising emails. “One thing we’ve learned,” Douglas says, “is that an email arc [for direct debit] has been super helpful.” Results of a two- or three-email series have been much better than a single “convert to monthly giving” ask. The process is changing the scale of the monthly giving program. “Our original direct debit conversion goal for 2016 was 450 people. We did that with one email last year,” Douglas reports.

The testing team is now in a position to support similar innovation across the organisation. One key, according to Chadwick, is not to tell people to test things but to support their ideas and be able to make them happen. In many cases, Chadwick was able to test an email or petition change by showing up at campaign team meetings, hearing what was planned for the week, and then offering to set up the email/landing page sequence.

Offering his time in this way has made it much easier for campaigns to agree to tests and opened staff up to new ideas. “We can do a lot more opportunity spotting and tying into the news,” he says, which generates more engaging campaigns. The side effect of a testing culture is not just better performing forms, but a growing organisational awareness of – and desire to benefit from – the ability to quickly test, learn and improve.
